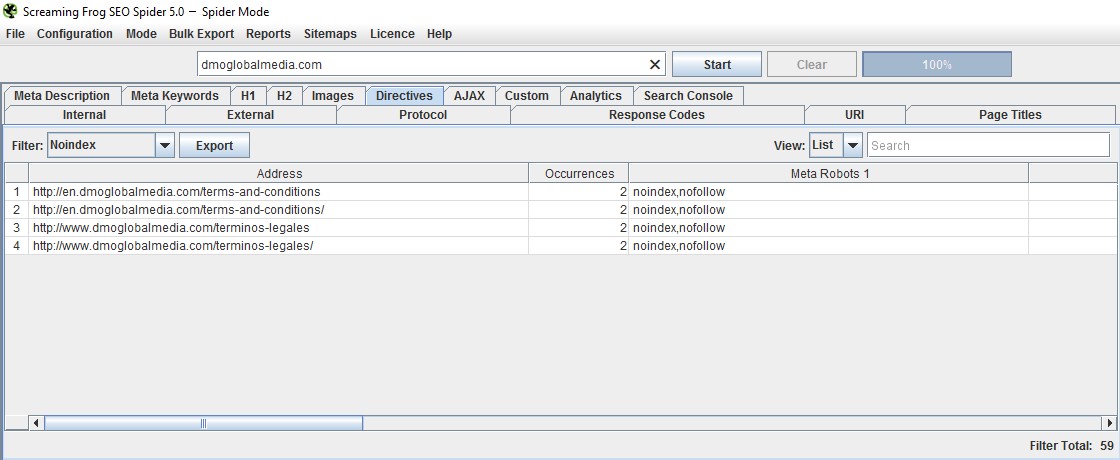

And as you can guess, the tool will specify if noindex is being delivered via the x-robots-tag (via the header response). With Google’s URL Inspection Tool, you can check specific urls to see if they are indexable. There’s nothing better than going straight to the source.

The list below contains tools directly from Google, Chrome extensions, and third-party crawling tools for checking urls in bulk. By adding this to your checklist, you can ensure that important directives are correct and that you are noindexing and nofollowing the right pages on your site (and not important ones that drive a lot of traffic from Google and/or Bing).

How To Check The X-Robots-Tag in the Header Responseīased on what I explained above, I decided to write this post to explain several different ways to check for the x-robots-tag. Imagine checking a site for the meta robots tag, thinking all is ok when you can’t see it, but the x-robots-tag is being used with “noindex, nofollow” on every url. You need to specifically check the header response to see if the x-robots-tag is being used, and which directives are being used.Īs you can guess, this can easily slip through the cracks unless you are specifically looking for it. They are in the header response, which is invisible to the naked eye. Here are two examples of the x-robots-tag in action:Īgain, those directives are not contained in the html code. By using this approach, you don’t need a meta tag added to each url, and instead, you can supply directives via the server response. In addition to the meta robots tag, you can also use the x-robots-tag in the header response. That’s not the only way to issue noindex, nofollow directives. That’s why it’s extremely important to check for the presence of the meta robots tag to ensure the right directives are being used.īut here’s the rub. It could be human error, CMS problems, reverting back to an older version of the site, etc. In other words, it can drop off a cliff.Īnd before you laugh-off that scenario, I can tell you that I’ve seen that happen to companies a number of times during my career. In a worst-case scenario, your organic search traffic can plummet in an almost Panda-like fashion. And when that happens, you can lose rankings for those pages and subsequent traffic. If that happens, and if it’s widespread, your pages can start dropping from Google’s index. For example, if you mistakenly add the meta robots tag to pages using noindex. That’s helpful when needed, but the meta robots tag can also destroy your SEO if used improperly. don’t pass any link signals through to the destination page). 
It’s one line of code that can keep lower quality pages from being indexed, while also telling the engines to not follow any links on the page (i.e. I have previously written about the power (and danger) of the meta robots tag. The list includes tools directly from Google, Chrome extensions, and third-party crawling tools. The post now contains the most current tools I use for checking the x-robots tag for noindex directives.
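Besides the tools in this post, the two places where noindex and nofollow can hide (the header response and the html) can be spot-checked with a short script. Below is a minimal sketch in Python using only the standard library. It is an illustration, not one of the tools covered here: the function names, the `x-robots-check/0.1` user-agent string, and the simplified handling of crawler-scoped values such as `googlebot: noindex` are all assumptions. It reports header directives and meta robots directives separately, so a noindex delivered via the x-robots-tag can't hide behind html that looks clean.

```python
from html.parser import HTMLParser
from urllib.request import Request, urlopen

# Directives whose own syntax contains a colon, so "name: ..." is not a crawler scope.
VALUED_DIRECTIVES = {"max-snippet", "max-image-preview", "max-video-preview", "unavailable_after"}


def parse_x_robots_tag(header_values):
    """Map each crawler ('*' = all crawlers) to the set of directives it receives.

    Simplified assumption: one optional "crawler:" prefix per header line.
    """
    rules = {}
    for value in header_values:
        agent, text = "*", value
        head, sep, rest = value.partition(":")
        name = head.strip().lower()
        # A leading "googlebot:" style prefix scopes the directives to that crawler.
        if sep and "," not in name and name not in VALUED_DIRECTIVES:
            agent, text = name, rest
        tokens = (t.strip().lower() for t in text.split(","))
        rules.setdefault(agent, set()).update(t for t in tokens if t)
    return rules


class _MetaRobotsParser(HTMLParser):
    """Collects (name, content) pairs from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.found = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {k: (v or "") for k, v in attrs}  # HTMLParser lowercases attribute names
        name = attr.get("name", "").strip().lower()
        if name in ("robots", "googlebot"):
            self.found.append((name, attr.get("content", "")))


def parse_meta_robots(html):
    """Same shape as parse_x_robots_tag, but read from meta tags in the html."""
    parser = _MetaRobotsParser()
    parser.feed(html)
    parser.close()
    rules = {}
    for name, content in parser.found:
        agent = "*" if name == "robots" else name
        tokens = (t.strip().lower() for t in content.split(","))
        rules.setdefault(agent, set()).update(t for t in tokens if t)
    return rules


def applies(rules, directive, agent="googlebot"):
    """True if `directive` (or 'none', which means noindex, nofollow) reaches `agent`."""
    seen = rules.get("*", set()) | rules.get(agent.lower(), set())
    return directive in seen or "none" in seen


def check_url(url, agent="googlebot"):
    """Fetch one url and report noindex/nofollow from the header and the html separately."""
    # Hypothetical user-agent string; some servers vary headers by user agent.
    req = Request(url, headers={"User-Agent": "x-robots-check/0.1"})
    with urlopen(req, timeout=10) as resp:  # raises urllib.error.HTTPError on 4xx/5xx
        header_rules = parse_x_robots_tag(resp.headers.get_all("X-Robots-Tag") or [])
        is_html = resp.headers.get_content_type() == "text/html"
        # Assumption: the page is utf-8; undecodable bytes are replaced.
        html = resp.read().decode("utf-8", errors="replace") if is_html else ""
    meta_rules = parse_meta_robots(html)
    return {
        "x_robots_noindex": applies(header_rules, "noindex", agent),
        "x_robots_nofollow": applies(header_rules, "nofollow", agent),
        "meta_noindex": applies(meta_rules, "noindex", agent),
        "meta_nofollow": applies(meta_rules, "nofollow", agent),
    }
```

Calling `check_url("https://www.example.com/")` makes a live request and returns a dictionary of four booleans. Keeping the header and meta results apart is the point: a url can show no meta robots tag at all and still be noindexed via the header response. Because a generic user-agent string may not receive the same headers Googlebot does, treat this as a quick sanity check and confirm important urls in Google's URL Inspection Tool.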
